Fine-Tuning Qwen2.5-VL on Your Own Images using LLaMA-Factory

Hi, I'm Jiajun Wang, a researcher focusing on the application of cutting-edge AI in engineering. This blog is my digital garden.

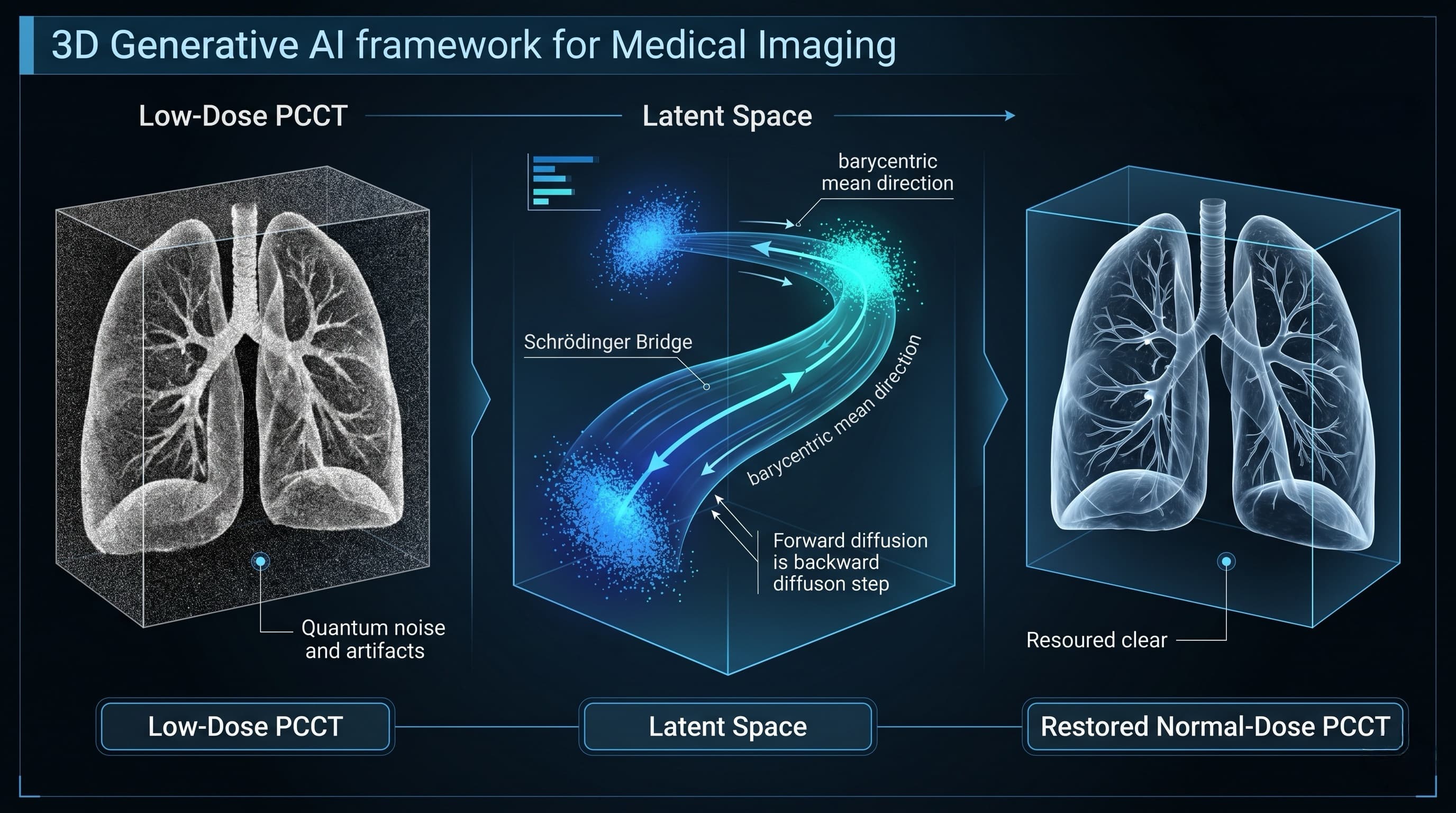

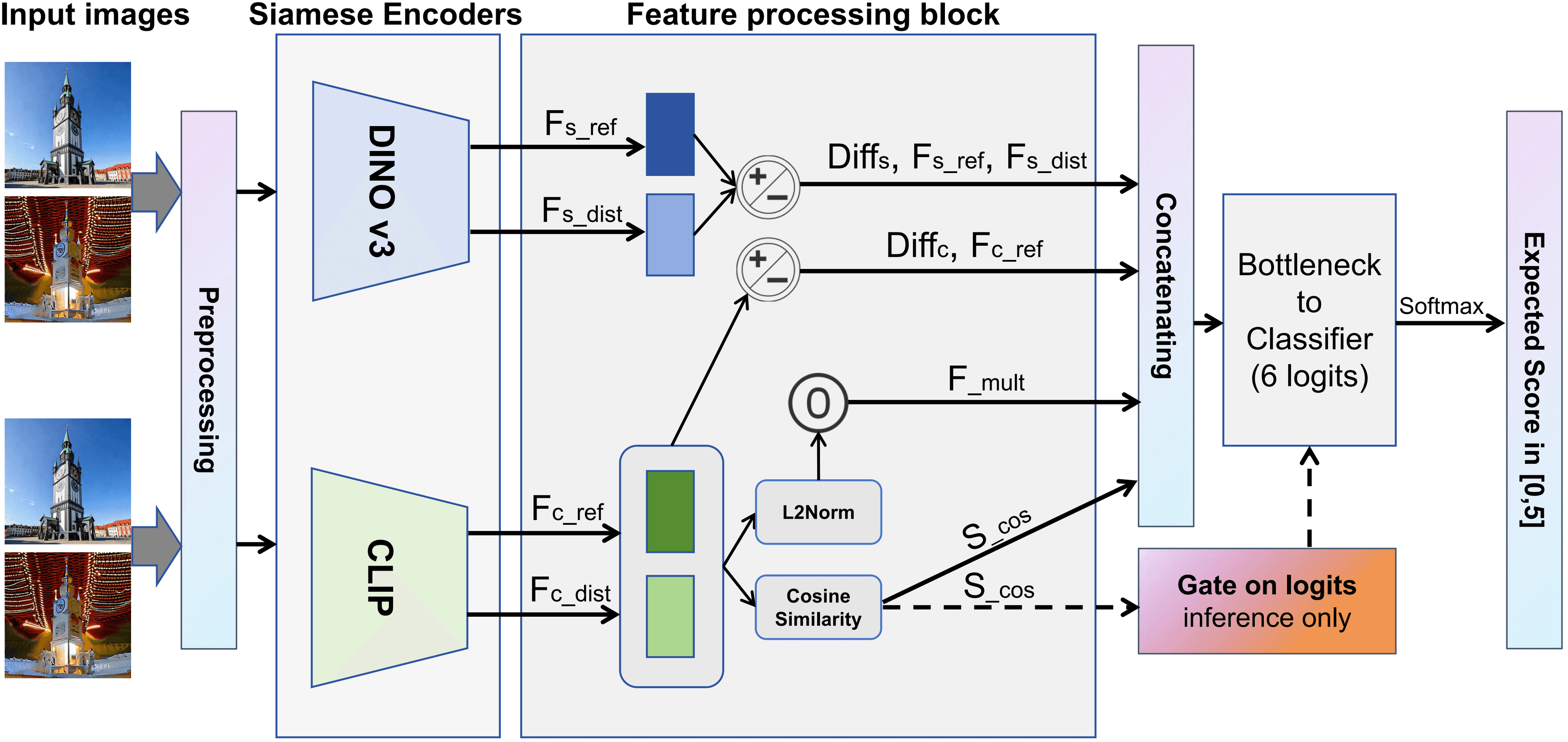

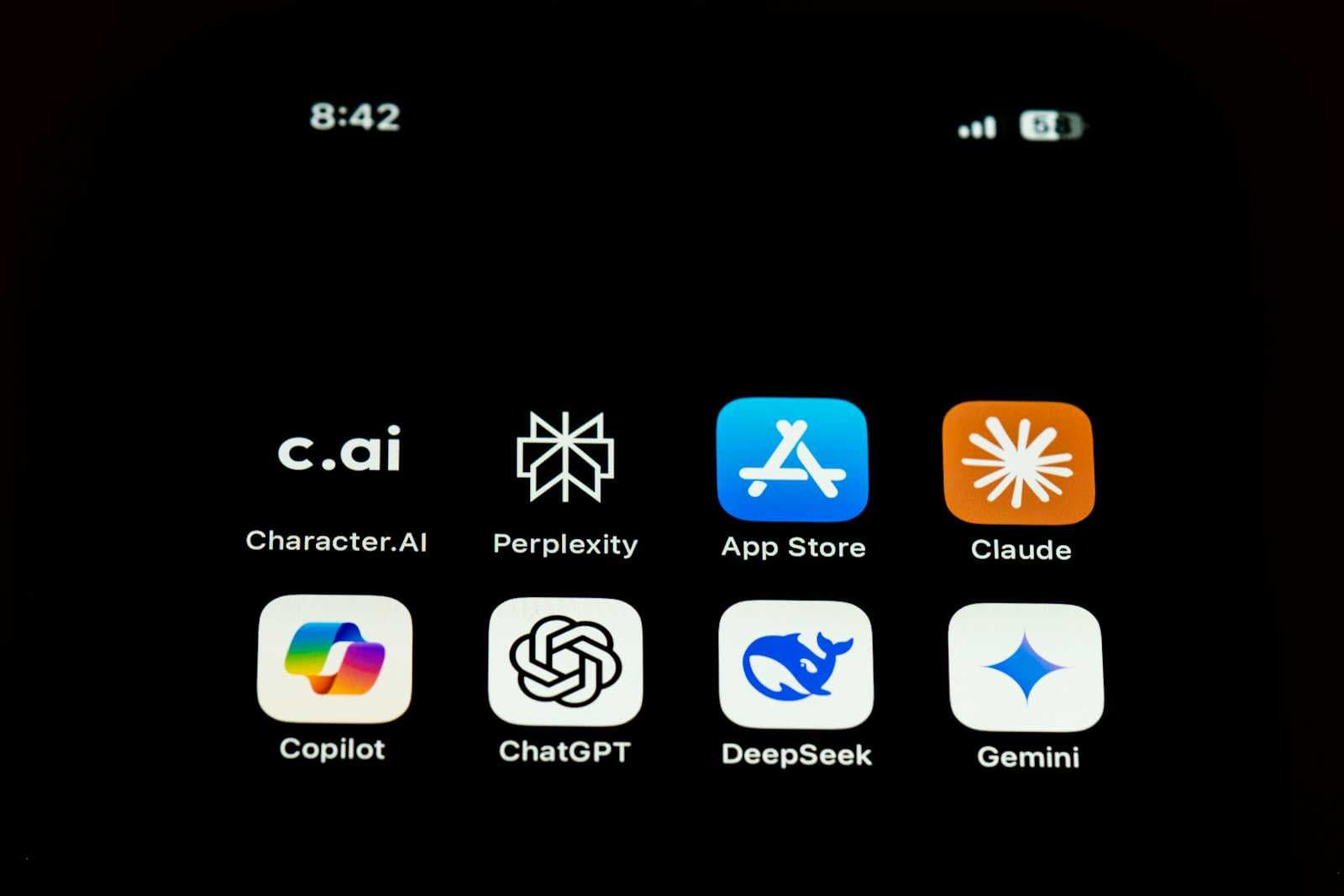

Large Language Models (LLMs) are rapidly gaining vision, giving rise to Vision-Language Models (VLMs). Models that can see and understand images, like Qwen2.5-VL, are game changers for tasks like OCR, medical imaging analysis, and visual agents.

However, fine-tuning these multimodal models has historically been a complex engineering nightmare.

Enter LLaMA-Factory.

This unified framework makes fine-tuning state-of-the-art models accessible to everyone. In this tutorial, I will guide you step-by-step through fine-tuning Qwen2.5-VL-7B-Instruct on a custom image dataset. Whether you are a researcher or a hobbyist, this guide will take you from an empty folder to a working custom VLM.

Prerequisites

Before we begin, ensure you have:

- Hardware: An NVIDIA GPU (24GB VRAM recommended for 7B models using LoRA; A100/H100 is ideal for faster training).

- OS: Linux (Ubuntu/CentOS) or Windows via WSL2.

- Python: Version 3.10 or higher.
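If you want to sanity-check the list above before installing anything, here is a minimal sketch using only the standard library. The function name check_prerequisites is my own, and looking for nvidia-smi on the PATH is only a rough proxy for "an NVIDIA driver is installed"; it does not tell you how much VRAM you have.

```python
import shutil
import sys


def check_prerequisites(min_python=(3, 10)):
    """Return a dict of basic checks; True means the requirement looks satisfied."""
    return {
        # The tutorial asks for Python 3.10 or higher.
        "python_ok": sys.version_info[:2] >= min_python,
        # nvidia-smi ships with the NVIDIA driver; if it is missing,
        # there is usually no usable NVIDIA GPU in this environment.
        "nvidia_smi_found": shutil.which("nvidia-smi") is not None,
    }


if __name__ == "__main__":
    for name, ok in check_prerequisites().items():
        print(f"{name}: {'OK' if ok else 'MISSING'}")
```

Run it once on the host and once inside WSL2 if you are on Windows, since the two can see different drivers.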

Step 1: Environment Setup

We need a clean environment with the specific dependencies for Qwen's visual processing capabilities.

- Create a Conda environment:

conda create -n qwen_vl_ft python=3.10

conda activate qwen_vl_ft

- Clone LLaMA-Factory:

git clone https://github.com/hiyouga/LLaMA-Factory.git

cd LLaMA-Factory

- Install dependencies:

This step is crucial. Qwen2.5-VL requires qwen-vl-utils to handle image inputs. Quote the extras so that shells such as zsh do not treat the square brackets as a glob pattern.

pip install -e ".[metrics]"

pip install qwen-vl-utils

(Optional but recommended: install Flash Attention 2 for faster training if you have an Ampere or Ada GPU such as an A100, RTX 3090, or RTX 4090. The training config in Step 4 sets flash_attn: fa2, which needs this package.)

pip install flash-attn --no-build-isolation

Step 2: Prepare Your Multimodal Dataset

Data preparation for VLMs is slightly different from text-only models. You need to link your text instructions to specific image files.

1. Organize your images

Create a folder named data/my_images inside the LLaMA-Factory directory and put all your training images there (e.g., .jpg or .png files).

2. Create the JSON file

Create a file named data/my_vl_data.json. The format should include an images list containing the path to the image.

Example Format:

[
  {
    "instruction": "<image>Analyze this image and describe the defects found.",
    "input": "",
    "output": "The image shows a crack in the metal surface located at the top left corner.",
    "images": [
      "data/my_images/defect_001.png"
    ]
  },
  {
    "instruction": "<image>What is the text written on the sign?",
    "input": "",
    "output": "The sign says 'Do Not Enter'.",
    "images": [
      "data/my_images/sign_045.jpg"
    ]
  }
]

Note: Ensure the image paths are relative to the LLaMA-Factory root directory or absolute paths. Each instruction should also contain one <image> placeholder per entry in its images list; LLaMA-Factory's multimodal data format uses it to mark where the visual tokens go in the prompt.
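Writing this JSON by hand gets tedious past a few dozen images, so here is a minimal sketch of how the records could be built and checked in Python. The helper names build_record and find_problems are my own, not part of LLaMA-Factory. The sketch assumes the framework expects one <image> placeholder per image in the prompt, so it prepends any that are missing.

```python
import json
from pathlib import Path

IMAGE_TOKEN = "<image>"


def build_record(instruction, output, image_paths):
    """Build one Alpaca-style record with one <image> placeholder per image."""
    image_paths = list(image_paths)
    missing = len(image_paths) - instruction.count(IMAGE_TOKEN)
    if missing > 0:
        # Prepend placeholders so their count matches the number of images.
        instruction = IMAGE_TOKEN * missing + instruction
    return {
        "instruction": instruction,
        "input": "",
        "output": output,
        "images": image_paths,
    }


def find_problems(records, root="."):
    """Return human-readable problems; an empty list means the data looks fine."""
    problems = []
    for i, rec in enumerate(records):
        for key in ("instruction", "output", "images"):
            if not rec.get(key):
                problems.append(f"record {i}: empty or missing '{key}'")
        for img in rec.get("images", []):
            path = Path(img)
            # Relative paths are resolved against the LLaMA-Factory root.
            full = path if path.is_absolute() else Path(root) / path
            if not full.is_file():
                problems.append(f"record {i}: image not found: {img}")
    return problems


if __name__ == "__main__":
    records = [
        build_record(
            "What is the text written on the sign?",
            "The sign says 'Do Not Enter'.",
            ["data/my_images/sign_045.jpg"],
        )
    ]
    print(json.dumps(records, indent=2, ensure_ascii=False))
    print(find_problems(records, root="."))
```

Once find_problems returns an empty list, write the records to data/my_vl_data.json with json.dump.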

3. Register the dataset

Open data/dataset_info.json and add your new dataset definition:

"my_vl_dataset": {
  "file_name": "my_vl_data.json",
  "formatting": "alpaca",
  "columns": {
    "prompt": "instruction",
    "query": "input",
    "response": "output",
    "images": "images"
  }
}
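Editing dataset_info.json by hand is easy to get wrong (a missing comma breaks every dataset in the file), so here is a minimal sketch that adds the entry programmatically. The function name register_dataset is my own; the entry it writes is the same one shown above.

```python
import json
from pathlib import Path


def register_dataset(info_path, name, file_name):
    """Add or overwrite one Alpaca-style multimodal entry in dataset_info.json."""
    info_path = Path(info_path)
    # Keep every dataset that is already registered.
    if info_path.exists():
        info = json.loads(info_path.read_text(encoding="utf-8"))
    else:
        info = {}
    info[name] = {
        "file_name": file_name,
        "formatting": "alpaca",
        "columns": {
            "prompt": "instruction",
            "query": "input",
            "response": "output",
            "images": "images",
        },
    }
    info_path.write_text(
        json.dumps(info, indent=2, ensure_ascii=False) + "\n", encoding="utf-8"
    )
    return info[name]


# Usage, run from the LLaMA-Factory root:
# register_dataset("data/dataset_info.json", "my_vl_dataset", "my_vl_data.json")
```

Because the file is parsed and re-serialized, the result is valid JSON, although the original indentation of the file may change.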

Step 3: Download the Base Model

For stability, download the model weights manually before training.

pip install huggingface_hub

# Download Qwen2.5-VL-7B-Instruct

huggingface-cli download Qwen/Qwen2.5-VL-7B-Instruct --local-dir models/Qwen2.5-VL-7B
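An interrupted download is a common source of confusing errors later on, so it can be worth checking the folder before training. The sketch below is a rough check under my own assumptions: the file names in EXPECTED_FILES are what I would expect in a Hugging Face checkpoint for this model family, and the function name missing_model_files is hypothetical.

```python
from pathlib import Path

# Assumption: files a complete checkpoint for this model family should contain.
EXPECTED_FILES = ("config.json", "tokenizer_config.json", "preprocessor_config.json")


def missing_model_files(model_dir):
    """Return the expected files that are absent from model_dir."""
    model_dir = Path(model_dir)
    missing = [name for name in EXPECTED_FILES if not (model_dir / name).is_file()]
    # The weights are stored as one or more .safetensors shards.
    if not any(model_dir.glob("*.safetensors")):
        missing.append("*.safetensors")
    return missing


# Usage, run from the LLaMA-Factory root:
# print(missing_model_files("models/Qwen2.5-VL-7B"))
```

An empty list means the basics are in place; it does not verify that every weight shard finished downloading.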

Step 4: Configure and Run Training (LoRA)

We will use LoRA (Low-Rank Adaptation). This is efficient and perfect for VLMs. We need to create a YAML configuration file.
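To make "efficient" concrete: LoRA freezes a weight matrix W of shape d_out x d_in and learns an update (alpha / r) * B * A, where B is d_out x r and A is r x d_in. The sketch below counts the parameters for one layer. The 3584 x 3584 shape is only an illustrative projection size, and lora_stats is a hypothetical helper name.

```python
def lora_stats(d_in, d_out, rank=16, alpha=16):
    """Compare full fine-tuning and LoRA parameter counts for one linear layer."""
    full_params = d_in * d_out           # every entry of W is trainable
    lora_params = rank * (d_in + d_out)  # A is rank x d_in, B is d_out x rank
    return {
        "full_params": full_params,
        "lora_params": lora_params,
        "trainable_fraction": lora_params / full_params,
        "scaling": alpha / rank,  # factor applied to the low-rank update B @ A
    }


if __name__ == "__main__":
    stats = lora_stats(3584, 3584, rank=16, alpha=16)
    print(stats["full_params"])                   # 12845056
    print(stats["lora_params"])                   # 114688
    print(round(stats["trainable_fraction"], 4))  # 0.0089
    print(stats["scaling"])                       # 1.0
```

With rank 16 and alpha 16 (the values used in the config), the update is applied with a scaling of 1.0 and trains under 1% of the parameters of that layer.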

Create train_qwen25_vl.yaml in the root folder:

### Model Configuration
model_name_or_path: models/Qwen2.5-VL-7B
template: qwen2_vl # CRITICAL: Must use 'qwen2_vl' for correct tokenization
trust_remote_code: true

### Method Configuration
stage: sft # Supervised Fine-Tuning
do_train: true
finetuning_type: lora
lora_target: all # Qwen-VL benefits from training all linear layers
lora_rank: 16
lora_alpha: 16

### Dataset Configuration
dataset: my_vl_dataset # Your custom dataset name
cutoff_len: 2048 # VLMs need longer context for image tokens
overwrite_cache: true
preprocessing_num_workers: 16

### Training Configuration
output_dir: saves/qwen2.5-vl/lora/sft # Save path
logging_steps: 10
save_steps: 100
plot_loss: true
overwrite_output_dir: true

### Hyperparameters
per_device_train_batch_size: 4 # Adjust based on VRAM (Try 2 if OOM)
gradient_accumulation_steps: 4
learning_rate: 1.0e-4
num_train_epochs: 5.0
lr_scheduler_type: cosine
warmup_ratio: 0.1
bf16: true # bf16 mixed precision; needs an Ampere or newer GPU (e.g. A100/RTX 3090)
flash_attn: fa2 # Use Flash Attention 2 (set to auto if flash-attn is not installed)

Start the Training: Run the following command:

llamafactory-cli train train_qwen25_vl.yaml

LLaMA-Factory will now handle the complex task of encoding your images into visual tokens and training the LoRA adapter to understand them.
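Before launching a long run, it helps to know roughly how many optimizer steps the config implies. Here is a back-of-the-envelope sketch using the hyperparameters from the YAML. The function name training_schedule is my own, and the trainer's own rounding may differ by a step or so.

```python
import math


def training_schedule(num_examples, per_device_batch=4, grad_accum=4,
                      num_gpus=1, epochs=5.0, warmup_ratio=0.1):
    """Estimate step counts implied by the batch and epoch settings."""
    # Examples consumed per optimizer step across all GPUs.
    effective_batch = per_device_batch * grad_accum * num_gpus
    steps_per_epoch = math.ceil(num_examples / effective_batch)
    total_steps = math.ceil(steps_per_epoch * epochs)
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    return {
        "effective_batch": effective_batch,
        "steps_per_epoch": steps_per_epoch,
        "total_steps": total_steps,
        "warmup_steps": warmup_steps,
    }


if __name__ == "__main__":
    # Hypothetical dataset of 1000 image/instruction pairs on one GPU.
    print(training_schedule(1000))
```

If you halve per_device_train_batch_size to avoid OOM, doubling gradient_accumulation_steps keeps the effective batch size (and therefore the schedule) unchanged. Also note that with save_steps: 100, a run shorter than 100 steps writes no intermediate checkpoints.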

Step 5: Inference (Testing Your Model)

Once training finishes, let's see if the model learned your task. You can use the CLI or the WebUI.

Using the WebUI (Easiest Method):

llamafactory-cli webui

- Go to the Chat tab.

- Select the Checkpoint: saves/qwen2.5-vl/lora/sft.

- Upload an image in the chat box.

- Type your instruction and see the magic!

Using CLI:

llamafactory-cli chat \
    --model_name_or_path models/Qwen2.5-VL-7B \
    --adapter_name_or_path saves/qwen2.5-vl/lora/sft \
    --template qwen2_vl \
    --finetuning_type lora
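Multi-line shell commands break easily when copied from a web page (a stray blank line after a backslash ends the command early). If that bites you, one option is to assemble the same command in Python. This sketch uses only the flags shown above; build_chat_command is a hypothetical helper name.

```python
import shlex


def build_chat_command(base_model, adapter_dir, template="qwen2_vl"):
    """Return the chat command from this step as an argument list."""
    return [
        "llamafactory-cli", "chat",
        "--model_name_or_path", base_model,
        "--adapter_name_or_path", adapter_dir,
        "--template", template,
        "--finetuning_type", "lora",
    ]


if __name__ == "__main__":
    cmd = build_chat_command("models/Qwen2.5-VL-7B", "saves/qwen2.5-vl/lora/sft")
    # Print a single-line version that is safe to paste into a shell.
    print(shlex.join(cmd))
```

You can paste the printed line into your terminal, or pass the list to subprocess.run(cmd) to start the chat session from a script.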

Step 6: Merge and Export (Optional)

If you want to deploy your model (e.g., using vLLM or Ollama), it is usually simplest to merge the LoRA weights into the base model so that you ship a single standalone checkpoint.

Create merge_vl.yaml:

model_name_or_path: models/Qwen2.5-VL-7B
adapter_name_or_path: saves/qwen2.5-vl/lora/sft
template: qwen2_vl
finetuning_type: lora
export_dir: models/Qwen2.5-VL-FinelyTuned
export_size: 5 # Maximum size of each exported weight shard, in GB
export_device: cpu # Use CPU for merging to save VRAM

Run the export:

llamafactory-cli export merge_vl.yaml

Conclusion

Fine-tuning multimodal models used to require specialized knowledge of visual encoders and projector layers. LLaMA-Factory abstracts this away, allowing you to treat images just like another data input.

By following this guide, you have successfully fine-tuned Qwen2.5-VL, one of the most powerful open-source VLMs available, on your own custom data.

Key Takeaways:

- Dependencies matter: Don't forget qwen-vl-utils.

- Data format: Ensure your JSON correctly points to your image paths.

- Template: Always use template: qwen2_vl for this specific model family.

Happy Fine-Tuning!

If you found this tutorial helpful, please share it with your community!